Building a Party Registration Page with Serverless and MongoDB

This file was automatically translated by AI; errors may occur.

Every year my wife and I throw a big costume summer party. Around 50 of our friends show up in costumes, we have games, a quiz, food, and a lot of fun. But, every year it's the same: tracking invites, tracking RSVPs, sending out the same information again and again. I tried solving it with a Facebook event, but some people refuse to use Facebook, some only RSVP for themselves leaving me wondering "Is your partner also coming?"

So this year the builder in me woke up and I built a web app for it.

Guests get a personal code, log in, RSVP, check the dress code. I get an admin dashboard where I can see who's coming, who's ignoring me, track activity, and see what dietary restrictions I need to deal with when I'm doing the grocery run.

It started as a fun weekend project. But the more I built, the more I realized this could work for basically any event. The architecture stays the same whether it's 50 guests or 500.

The code is available on Serverless Handbook.

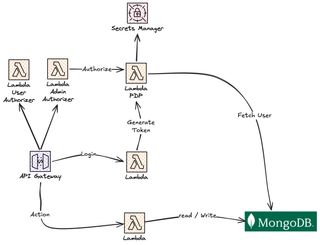

Architecture overview

So this is the basic architecture: serverless and a database, in this case MongoDB.

The frontend is a React 19 app built with Vite, hosted from S3 behind CloudFront, with Tailwind for styling. Nothing unusual there.

The backend is where it gets more interesting. Lambda functions behind API Gateway, with separate Lambda authorizers controlling access through the PDP/PEP pattern. I wrote about that pattern in detail in a previous post if you want the full picture.

For the database I went with MongoDB Atlas. More on that later, but in short I tried DynamoDB but got tired of so many different GSIs.

Authentication is self-signed JWTs, similar to my AI Bartender. The PDP (central auth service) signs tokens with a private key. The custom Lambda Authorizers in API Gateway (PEP) just fetch the public key and can validate the JWT. Simple, and hard to mess up.

Everything is defined in SAM templates and this time I deployed to eu-north-1 because I'm in Sweden and my guests are local.

Authentication to Atlas

Authentication towards Atlas could of course be done with a traditional username and password. But in my previous post I introduced Outbound Identity Federation with MongoDB, and that is what I use here.

User Authentication made easy

I didn't want my guests to have to register and set up a username and password. My guests are RSVPing for a garden party, not signing up for a SaaS product. So I wanted something simple, and in the end I opted for a personal token paired with their last name.

Each guest gets a code that I generate in the admin dashboard. They type in their code and last name. That's the whole login.
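Here is a minimal sketch of how such a code could be generated. The helper name, the length of 8, and the alphanumeric alphabet are assumptions for illustration, not necessarily what the admin dashboard does.

```python
import secrets
import string

# Assumption: codes are 8 alphanumeric characters.
ALPHABET = string.ascii_letters + string.digits


def generate_guest_code(length: int = 8) -> str:
    """Return a random code drawn from a cryptographically secure source."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


code = generate_guest_code()
print(code)  # a different random code on every run
```

Using secrets instead of random matters here because the code is half of the login, so it should not be guessable.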

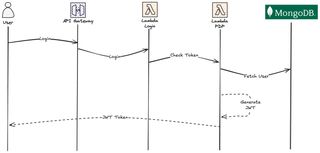

So this is the guest login flow.

- Frontend (User) sends the code and last name to POST /auth/login

- API Gateway routes to a Login Proxy Lambda

- That Lambda calls the PDP Lambda directly (Lambda-to-Lambda)

- PDP looks up the token in MongoDB, checks the last name

- If it matches, signs a JWT with the RSA private key

- Frontend stores the JWT, sends it as a Bearer token on every request after that

The JWT contains the guest's ID, name, and whether they're an admin. Signed with RS256, so the PDP holds the private key and everything else just needs the public key. No shared secrets.
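The check the PDP performs before signing could look roughly like the sketch below. It uses an in-memory dictionary as a stand-in for the MongoDB lookup and stops at building the claims, since RS256 signing needs a JWT library. The function name, the claim names (sub, name, isAdmin, iat, exp) and the one-hour lifetime are all assumptions.

```python
import hmac
import time
from typing import Optional

# Hypothetical stand-in for the guests collection, keyed by token.
GUESTS = {
    "vTFSxGE3": {"_id": "guest-1", "firstName": "John", "lastName": "Doe", "isAdmin": False},
}


def check_login(code: str, last_name: str, now: Optional[int] = None, ttl_seconds: int = 3600):
    """Return the claims to sign for a valid code and last name, else None."""
    guest = GUESTS.get(code)
    if guest is None:
        return None
    # Compare case-insensitively, ignoring surrounding whitespace, in constant time.
    given = last_name.strip().casefold().encode()
    stored = guest["lastName"].casefold().encode()
    if not hmac.compare_digest(given, stored):
        return None
    issued_at = int(time.time()) if now is None else now
    return {
        "sub": guest["_id"],
        "name": f'{guest["firstName"]} {guest["lastName"]}',
        "isAdmin": guest["isAdmin"],
        "iat": issued_at,
        "exp": issued_at + ttl_seconds,
    }


print(check_login("vTFSxGE3", "doe", now=1000))
```

A None result maps to a failed login; anything else would be handed to the signing step.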

A note on the Lambda-to-Lambda call from the Login proxy to the PDP: this is far from best practice. Sometimes it's OK, and for this small, simple solution I opted for it. In a production setup my PDP would sit behind an API Gateway, with no Lambda-to-Lambda calls.

I said this solution could be used for an event of any size. For a larger event, we would replace the small login flow with a proper signup using Cognito User Pools, Okta, Auth0 or similar. Add Stripe Checkout in front of that and we get paid ticketing as well.

Why MongoDB and Not DynamoDB

In my first version I used DynamoDB, and it worked fine for 50 guests. Then I started to add functionality: the ability to connect guests, activity tracking, and more. I added some more indexes but still ended up with several scans.

As an example, in the admin dashboard I list all guests. With DynamoDB that resulted in a Scan. Sure, I could probably add an index where the Partition Key would be a fake party name and the guest ID the Sort Key. But that was not what I wanted.

Then came filtering, like "Show me everyone who hasn't RSVP'd." That meant one more scan with filtering in my code, or yet another GSI for every query pattern I could think of.

So I started thinking: what if an event has 500, 1,000, or 10,000 guests? Scanning every time would not be great.

And then came relationships. My party has couples and families. John and Jane are a pair, they share an RSVP. I modeled this in DynamoDB with a connectedTo field, a bidirectional link between records. Fine for two people. But a family of four? A group of friends? Connecting two guests means updating both records. Disconnecting means finding the other person and updating both again. It got messy fast.

Then aggregation. How many total guests are coming? Scan everything into the Lambda function, loop through it, and sum it up.

It felt more like I needed a relational database where I could query, sort, filter, and aggregate. At the same time, I needed a dynamic number of fields (keys) per guest, which is one of DynamoDB's big strengths.

This is where I decided to move to MongoDB Atlas and get the best out of both worlds.

The MongoDB Data Model

I decided to go with one collection: one document per guest. No joins and no references to chase.

    {
      "_id": ObjectId("..."),
      "firstName": "John",
      "lastName": "Doe",
      "token": "vTFSxGE3",
      "status": "pending",
      "numGuests": 0,
      "dietary": "",
      "expectedGuests": 1,
      "isAdmin": false,
      "groupId": "doe-family",
      "rsvpDate": null,
      "lastLogin": null,
      "lastAccess": null,
      "createdAt": ISODate("2026-04-01T18:00:00Z")
    }

The interesting field is groupId. That's the whole relationship model, this is how couples and groups are now connected.

Groups Instead of Pairs

In DynamoDB I had connectedTo: "jane-uuid" on John's record and connectedTo: "john-uuid" on Jane's. Bidirectional links. Works for two people but not for groups.

The MongoDB version is simpler. Everyone in a family or group shares the same groupId. No updating two records just to connect people.

For a couple it would be:

    { firstName: "John", groupId: "doe-family", expectedGuests: 1 }
    { firstName: "Jane", groupId: "doe-family", expectedGuests: 1 }

And for a family of 4 it's just the same:

    { firstName: "Bob", groupId: "smith-family", expectedGuests: 1 }
    { firstName: "Alice", groupId: "smith-family", expectedGuests: 1 }
    { firstName: "Tom", groupId: "smith-family", expectedGuests: 1 }
    { firstName: "Emma", groupId: "smith-family", expectedGuests: 1 }

So what is expectedGuests and how does it work? Each member's expectedGuests includes themselves plus anyone they're bringing who doesn't have their own login. For example, in a family with young kids the kids would not get their own login. Instead, the expectedGuests on the parents would be greater than 1.
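As a small worked example, take a hypothetical family where two parents have logins and each brings one child who does not. The names and numbers below are made up for illustration.

```python
# Hypothetical family: two parents with logins, two kids without.
family = [
    {"firstName": "Maria", "groupId": "lind-family", "expectedGuests": 2},  # Maria + one kid
    {"firstName": "Oskar", "groupId": "lind-family", "expectedGuests": 2},  # Oskar + one kid
]

# The group's expected head count is the sum over its members.
expected_total = sum(member["expectedGuests"] for member in family)
print(expected_total)  # 4
```

Two documents, four expected people, and no separate records for the kids.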

If I now want to add someone to a group, it's one update of that person's document.

    db.guests.update_one(
        {"_id": new_member_id},
        {"$set": {"groupId": existing_group_id}}
    )

Removing someone? Same thing, one update.

    db.guests.update_one(
        {"_id": member_id},
        {"$set": {"groupId": None}}
    )

Compare that to the DynamoDB version where connecting two guests meant updating both records, checking neither was already connected to someone else, and handling the error cases for both writes.

Indexes

Moving to MongoDB doesn't mean I can skip indexes. I still need them, same as I would with a relational database such as PostgreSQL or MySQL. But a MongoDB index (or a PostgreSQL index) and a DynamoDB GSI are not the same thing. They share a name and that's about it.

An index in MongoDB or PostgreSQL is a lightweight data structure, essentially a pointer. It basically says "if you're looking for documents where status is pending, here's where they are." The original data stays where it is. The index just makes lookups fast. I can create one on any field at any time, and it only costs me a bit of storage and some write overhead.

A GSI in DynamoDB is closer to a full copy of the data. When I create a GSI, DynamoDB duplicates every item, or the attributes I decide to project, into a separate structure with its own partition key and sort key. I pay for storage on both copies, and I pay for write capacity on both.

That difference matters a lot. In MongoDB, adding an index on status costs very little, and I can query that field any way I want: equals, not-equals, ranges, regex, whatever. In DynamoDB, adding a GSI means deciding upfront which attribute is the partition key. Every new access pattern potentially means a new GSI with its own cost and eventual consistency tradeoffs.

So when I say my MongoDB indexes are more flexible, that is what I mean.

So I ended up with four indexes. Each one exists because of a real query that the app runs.

    # Login lookup. Every login hits this. Must be unique.
    guests.create_index("token", unique=True)

    # Group lookup. Every RSVP fetches all group members.
    guests.create_index("groupId", sparse=True)

    # Status filter. Admin dashboard filters by pending/coming/declined.
    guests.create_index("status")

    # Compound: status + dietary. Allergy report for coming guests.
    guests.create_index([("status", 1), ("dietary", 1)])

The sparse=True on groupId keeps guests without a groupId field out of the index. One caveat: a MongoDB sparse index only skips documents where the field is missing, so a guest stored with groupId: null is still indexed. Either way it saves only a tiny bit of space and doesn't affect query performance, since I only search by groupId when I know it exists. I have no need to find guests without a connection, not now anyway.

Settings

I need to set an RSVP deadline. If a guest tries to RSVP or change their RSVP after that date, they have to call or text me directly. After a certain date I don't want to keep checking RSVP changes, as it's too close to the actual party date.
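The deadline check itself can be tiny. Here is a sketch, assuming the deadline is available as a timezone-aware UTC datetime; the function name and the example dates are hypothetical.

```python
from datetime import datetime, timezone
from typing import Optional


def rsvp_is_open(rsvp_deadline: datetime, now: Optional[datetime] = None) -> bool:
    """Return True while RSVP changes are still accepted.

    Assumes both values are timezone-aware UTC datetimes.
    """
    current = now if now is not None else datetime.now(timezone.utc)
    return current <= rsvp_deadline


# Hypothetical deadline, for illustration only.
deadline = datetime(2026, 6, 1, 23, 59, 59, tzinfo=timezone.utc)
print(rsvp_is_open(deadline, now=datetime(2026, 6, 1, 12, 0, tzinfo=timezone.utc)))  # True
print(rsvp_is_open(deadline, now=datetime(2026, 6, 2, 0, 0, tzinfo=timezone.utc)))   # False
```

The backend would run this before accepting a write and return an error telling the guest to get in touch directly once the deadline has passed.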

In DynamoDB I stored the RSVP deadline in the same table as the guests, using the single table design.

In MongoDB I decided to give settings their own collection:

    db.settings.find_one({"_id": "app-settings"})

    {
      "_id": "app-settings",
      "rsvpDeadline": ISODate("YYYY-MM-DDT23:59:59Z"),
      "eventName": "Jimmy's Party!",
      "eventDate": ISODate("YYYY-MM-DDT16:00:00Z")
    }

The RSVP Flow

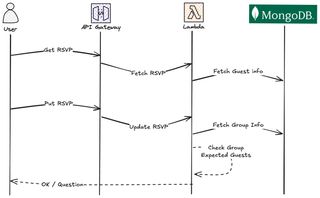

This is where the group model really pays off and I can build some great logic.

So this is the guest RSVP flow.

When someone opens the RSVP page, the app first gets their record and everyone in their group, and calculates the group expected count.

    guest = db.guests.find_one({"_id": guest_id})
    group_members = []
    family_sum = guest["expectedGuests"]

    if guest.get("groupId"):
        # Fetch the other members of the group, excluding the guest.
        group_members = list(db.guests.find({
            "groupId": guest["groupId"],
            "_id": {"$ne": guest["_id"]}
        }))
        family_sum += sum(m["expectedGuests"] for m in group_members)

The frontend shows the expected guest count and their group members. When they submit, the app compares what they entered against the expected total.

If the numbers match, great, everyone in the group is coming. One bulk update marks the whole group as coming.

If the guest enters fewer people than expected, a dialog asks who in the group is not coming: "Expected 2 but you said 1. Is Lisa not coming?" And if they enter more than expected, the app double-checks: "Expected 2 but you said 3. Sure about that?"
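The three outcomes can be expressed as one small function. This is a sketch only: the function name, the outcome labels, and the generic wording of the messages are assumptions, and the real dialog names the missing group member.

```python
from typing import Tuple


def check_rsvp_count(entered: int, expected: int) -> Tuple[str, str]:
    """Compare the entered head count with the group's expected total."""
    if entered == expected:
        return ("match", "")
    if entered < expected:
        return ("fewer", f"Expected {expected} but you said {entered}. Who is not coming?")
    return ("more", f"Expected {expected} but you said {entered}. Sure about that?")


print(check_rsvp_count(2, 2))
print(check_rsvp_count(1, 2))
print(check_rsvp_count(3, 2))
```

Only the "match" outcome goes straight to the bulk update; the other two trigger a confirmation dialog first.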

The bulk update is a single call.

    # Assumes: from datetime import datetime, timezone
    db.guests.update_many(
        {"groupId": guest["groupId"], "_id": {"$ne": guest_id}},
        {"$set": {"status": "coming", "numGuests": 0, "rsvpDate": datetime.now(timezone.utc)}}
    )

The Admin Dashboard

This is where I spend my time in the app, checking who is coming and who has not even logged in yet. The closer we get to the party date, the more time I spend checking this page. I probably need to build a notification system that does this for me.

The overview gives me the numbers I care about: invited guests, total guests coming, allergies. All from a single database aggregation.

    stats = db.guests.aggregate([
        {"$group": {
            "_id": None,
            "totalInvited": {"$sum": 1},
            "totalComing": {"$sum": {"$cond": [{"$eq": ["$status", "coming"]}, 1, 0]}},
            "totalGuests": {"$sum": {"$cond": [{"$eq": ["$status", "coming"]}, "$numGuests", 0]}},
            "totalExpected": {"$sum": "$expectedGuests"},
            "withAllergies": {"$sum": {"$cond": [
                {"$and": [{"$eq": ["$status", "coming"]}, {"$ne": ["$dietary", ""]}]},
                1, 0
            ]}},
        }}
    ])

Then there is the guest list page. This is where I add people, generate tokens, set the expected guest count, connect guests to a group, and more.

The admin part also includes a summary of the people who have RSVPed and a summary of all food preferences and allergies. Nothing special there: as above, each is a straightforward aggregation query against MongoDB.
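To show the shape of such a summary, here is a plain-Python stand-in that counts dietary entries among guests who are coming. In the app this would be an aggregation in MongoDB; the sample documents and the function name are made up for illustration.

```python
from collections import Counter

# Hypothetical sample documents, reduced to the fields the summary needs.
sample_guests = [
    {"firstName": "John", "status": "coming", "dietary": "vegetarian"},
    {"firstName": "Jane", "status": "coming", "dietary": ""},
    {"firstName": "Bob", "status": "declined", "dietary": "gluten-free"},
    {"firstName": "Alice", "status": "coming", "dietary": "gluten-free"},
    {"firstName": "Tom", "status": "coming", "dietary": "vegetarian"},
]


def dietary_summary(guests):
    """Count non-empty dietary entries for guests whose status is 'coming'."""
    return Counter(
        g["dietary"] for g in guests
        if g["status"] == "coming" and g["dietary"]
    )


print(dict(dietary_summary(sample_guests)))  # {'vegetarian': 2, 'gluten-free': 1}
```

Bob's entry is ignored because he declined, which is the same status filter the compound status and dietary index supports.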

Activity Tracking

I decided that I need to track whether my guests have even logged in, and when they last accessed the page. That way, when the party is closing in, I can see if people who have not RSVPed have at least logged in once. So I added lastLogin and lastAccess timestamps. Writing to the database synchronously on every single API call felt like unnecessary latency.

I therefore added an SQS queue where a separate Lambda function can batch operations towards the database. Certain events in the page add a message to the queue. If sending to SQS fails, the API call still works. The tracking is best-effort, which is all I need for "did they at least open the page."
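Both halves of that idea can be sketched without any AWS dependency. Below, send is any callable that enqueues a message body (in a Lambda it would wrap the SQS client), and the message shape, function names, and timestamp format are assumptions.

```python
import json
import logging
from typing import Callable

logger = logging.getLogger("activity")


def track_activity(send: Callable[[str], None], guest_id: str, event: str, timestamp: str) -> bool:
    """Try to enqueue an activity message; never raise to the caller."""
    body = json.dumps({"guestId": guest_id, "event": event, "timestamp": timestamp})
    try:
        send(body)
        return True
    except Exception:
        # Best-effort: log and move on so the API call still succeeds.
        logger.warning("activity tracking failed for %s", guest_id)
        return False


def latest_access_per_guest(messages: list) -> dict:
    """Collapse a batch of messages into one lastAccess value per guest."""
    latest = {}
    for raw in messages:
        msg = json.loads(raw)
        guest_id, ts = msg["guestId"], msg["timestamp"]
        # ISO 8601 UTC strings in the same format sort chronologically.
        if guest_id not in latest or ts > latest[guest_id]:
            latest[guest_id] = ts
    return latest


queue = []
track_activity(queue.append, "g1", "page_view", "2026-05-01T10:00:00Z")
track_activity(queue.append, "g1", "page_view", "2026-05-01T10:05:00Z")
print(latest_access_per_guest(queue))  # {'g1': '2026-05-01T10:05:00Z'}
```

Collapsing the batch first means the consumer writes once per guest instead of once per message.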

Authorization with PDP and PEP

I covered the PDP/PEP pattern and JWT signing earlier. Here's how the two authorizers map to the API endpoints:

- GET /rsvp, PUT /rsvp use the guest authorizer (any valid JWT passes)

- GET /admin/guests, POST /admin/guests, etc. use the admin authorizer (checks isAdmin is true)

- POST /auth/login uses no authorizer (public)
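The decision logic behind that mapping can be condensed into one function for illustration. In API Gateway the authorizers are attached per route rather than living in a single function, so this is a sketch only: it assumes the JWT signature has already been verified and that claims is the decoded payload (or None when no valid token was sent).

```python
from typing import Optional

PUBLIC_ROUTES = {("POST", "/auth/login")}
ADMIN_PREFIX = "/admin/"


def is_allowed(method: str, path: str, claims: Optional[dict]) -> bool:
    """Return True if the request may proceed.

    Assumes `claims` comes from an already verified JWT.
    """
    if (method, path) in PUBLIC_ROUTES:
        return True  # no authorizer on the login route
    if claims is None:
        return False  # every other route needs a valid token
    if path.startswith(ADMIN_PREFIX):
        return claims.get("isAdmin") is True  # admin authorizer
    return True  # guest authorizer: any valid JWT passes


guest = {"sub": "guest-1", "isAdmin": False}
print(is_allowed("GET", "/rsvp", guest))          # True
print(is_allowed("GET", "/admin/guests", guest))  # False
```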

Scaling This for Real Events

This works for my 50-person garden party. But could it scale to a 10,000-person conference? Honestly, yes it could. Serverless would scale well and the MongoDB data model feels solid. Of course there would be a need for some tweaks, but most of the work is already done.

More guests just means more documents in MongoDB. The indexes already cover the query patterns, and at this scale skip and limit pagination is fine. If I need public registration instead of invite codes, I can swap in Cognito User Pools. The authorizers don't really care, they just check the JWT.

For paid events, I would put Stripe Checkout in front of the signup. A webhook fires on payment, the backend creates the guest record and sends a confirmation, and the RSVP page becomes a ticket page.

To turn this into a SaaS solution that handles multiple tenants and events, I would add a tenant ID and an event ID to the data model. The queries would change a bit and some extra functionality would be needed, but most of the logic is already there.
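One way to change the queries is to build every filter through a small helper that always adds the scope. This is a sketch; the field names tenantId and eventId and the helper itself are hypothetical.

```python
from typing import Optional


def scoped_filter(tenant_id: str, event_id: str, extra: Optional[dict] = None) -> dict:
    """Return a query filter that is always limited to one tenant and event."""
    query = dict(extra or {})
    # Applied last so a caller-supplied filter can never override the scope.
    query.update({"tenantId": tenant_id, "eventId": event_id})
    return query


print(scoped_filter("acme", "summer-party", {"status": "pending"}))
# {'status': 'pending', 'tenantId': 'acme', 'eventId': 'summer-party'}
```

The resulting dictionary can be passed to the same find and update calls as before, and the existing indexes would likely need tenantId and eventId as leading keys.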

Final Words

A small hobby project that started as "I don't want to chase RSVPs in group chats" turned into the foundation for a full serverless event platform. MongoDB handles the data, Lambda keeps the backend serverless, and the PDP/PEP pattern gives me the authorization I need.

The code is available on Serverless Handbook.

Check out my other posts on jimmydqv.com and follow me on LinkedIn for more serverless content.

Now Go Build!